Early on in the AI journey, tools like Grammarly, Hemingway, and Yoast helped analyze content to check spelling, simplify language, and provide SEO suggestions.

ChatGPT came along and started producing apparently intelligent articles in seconds.

But while all of these AI tools can be helpful, they can also create problems… Autocorrect, anyone?

Rise of the machines

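An early milestone in automated text analysis came in 1975, when the U.S. Navy developed the Flesch-Kincaid readability test to indicate the difficulty level of an English text. Its score maps to a U.S. school grade, so the lower the grade level, the easier the text is to read. (The related Flesch Reading Ease scale runs the other way: the higher the score, the easier the text.) Most U.S. presidential candidates’ speeches are intentionally written to a grade 6-8 level.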
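For readers who want to see the arithmetic, here is a minimal Python sketch of the two Flesch formulas: the Flesch-Kincaid Grade Level, where a higher number means harder text, and the Flesch Reading Ease score, where a higher number means easier text. The coefficients are the standard published ones. The syllable counter is a crude vowel-group heuristic and an assumption of this sketch, so its scores will drift from what commercial tools report.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic (an assumption of this sketch): one syllable per vowel group."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def readability(text: str) -> dict:
    """Return Flesch-Kincaid Grade Level and Flesch Reading Ease for `text`."""
    # Split on sentence-ending punctuation and drop empty fragments.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    # A word is a run of letters, optionally followed by an apostrophe suffix.
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")

    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)

    return {
        # Higher grade level = harder to read.
        "grade_level": 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59,
        # Higher reading ease = easier to read.
        "reading_ease": 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word,
    }


simple = readability("The cat sat on the mat.")
dense = readability(
    "Derivative instruments necessitate comprehensive regulatory compliance documentation."
)
print(simple)
print(dense)
```

With this heuristic, “The cat sat on the mat.” scores a grade level of about -1.45 and a reading ease of about 116, which is off the easy end of both scales. The jargon-heavy sentence lands far higher on grade level and far lower on reading ease, which is the trade-off financial writers have to manage.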

Flesch-Kincaid scoring is now built into many writing tools, including Microsoft Word, Apple Pages, Yoast, and Grammarly.

Those latter two catch their fair share of mistakes and can definitely assist in writing. But you can’t rely on them alone to produce high-quality content.

Grammarly can be persnickety about passive voice, split infinitives, and uncertain pronouns. But as one user noted, the program often glosses over missing articles, misplaced possessives, and prepositions. As for passive voice, using it in prose isn’t always bad writing, but AI loves to bring the hammer down. Slate Magazine’s Yascha Mounk once pointed out to Grammarly developers that Hemingway loved passive voice and was awarded the Nobel Prize in Literature in 1954. So maybe leave some wiggle room.

Regarding SEO, chasing all the green lights on Yoast won’t guarantee results. In fact, it may produce the opposite of high-quality content: spammy content.

Yoast’s SEO analysis doesn’t account for well-written headlines or click-through rates, only detects exact-match keywords, and encourages keyword density in ways that Google now actively penalizes. Organic ranking — the kind financial content marketers want — is determined by Google’s algorithm and its continuously improving understanding of language and intent.
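To make the exact-match limitation concrete, here is a minimal Python sketch of a naive keyword-density check. It illustrates the general technique only and is not Yoast’s actual implementation; the function name `keyword_density` and the sample sentences are hypothetical.

```python
import re


def keyword_density(text: str, keyphrase: str) -> float:
    """Share of the words in `text` taken up by exact matches of `keyphrase` (0.0 to 1.0)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    phrase = re.findall(r"[a-z0-9']+", keyphrase.lower())
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    # Slide a window over the text and count exact word-for-word matches only.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)
    return hits * n / len(words)


natural = "Retirement accounts such as Roth IRAs can grow tax-free."
stuffed = "Roth IRA tips: open a Roth IRA, fund your Roth IRA, love your Roth IRA."

print(keyword_density(natural, "Roth IRA"))  # the plural "IRAs" is not an exact match
print(keyword_density(stuffed, "Roth IRA"))
```

The natural sentence is plainly about Roth IRAs but scores zero because the plural doesn’t match, while the stuffed sentence scores high. A writer chasing that number is pushed toward the second style, which is the spammy outcome described above.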

And as AI answer engines increasingly surface financial content in response to user queries, the standards for what gets cited are higher than ever. Answer engines favor content that is precise, consistently structured, and demonstrably authoritative, which are exactly the qualities that AI-generated workslop (polished-looking output that hides substantive errors) lacks. We covered what that means for financial content teams in our recent piece on answer engine optimization (AEO).

To be perfectly clear

Clear and simple writing is every content marketer’s goal, but fifth- and sixth-graders aren’t the target audience for most financial content.

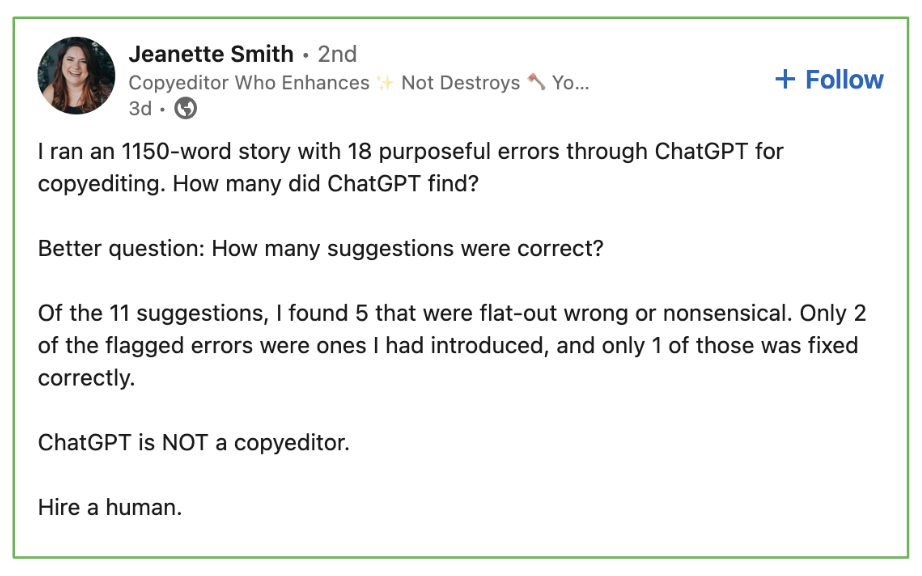

Will ChatGPT and the like ever fully wrap their neural networks around the marketing, technical, and compliance nuances required for great financial content? Probably not. But that doesn’t mean AI can’t help with some of the work. Until AI stops hallucinating and offering legally questionable information, you will need human writers with real-world finance experience doing most of the heavy lifting.

Talented human editors help ensure your content is compelling and compliant once AI tools have done their part.

What ChatGPT said (2024)

Back in 2024, we asked ChatGPT to explain why humans shouldn’t rely on it to write about complex financial topics. Here is that unedited response.

“There are several reasons why relying on AI, including models like me, for complex financial topics might not be the best approach:

1. Accuracy and Timeliness: My training data only goes up until a certain point in time, so I don’t have access to real-time financial data, market trends, or recent economic events. Financial markets are dynamic, and information can become outdated quickly.

2. Contextual Understanding: While I can provide general information based on historical data, my understanding of specific current market conditions or individual financial situations is limited. Financial advice often requires a deep understanding of an individual’s personal circumstances, goals, and risk tolerance, which AI cannot fully comprehend.

3. Regulatory Compliance and Legal Advice: Financial advice is heavily regulated, and providing personalized investment advice typically requires certification or licensure. I cannot provide advice that complies with current regulations or adapt to changes in laws over time.

4. Complexity of Financial Products: The financial world includes a vast array of products and instruments, each with its own rules, risks, and opportunities. Nuanced advice on investment strategies, tax implications, or financial planning requires expertise beyond what AI can offer.

5. Ethical Considerations: Financial decisions can have a significant impact on an individual’s life. These decisions should be made with careful consideration and input from qualified professionals.

6. Personalization: Financial planning is highly personal and depends on individual goals, risk tolerance, and financial situation. AI can provide general information but lacks the ability to offer personalized guidance that adapts to changing needs.

In summary, while I can be a valuable tool for providing general information on financial topics, it is essential to consult with qualified financial professionals for advice that is accurate, up-to-date, personalized, and compliant with current regulations.”

Claude.ai’s 2026 review

That response was accurate then and largely holds up now.

But two years of widespread AI adoption in content marketing have surfaced a problem the 2024 version of this article didn’t anticipate.

The issue isn’t just hallucination — it’s workslop.

BetterUp Labs and Stanford’s Social Media Lab found that 40% of U.S. desk workers encountered AI-generated content in the past month that read well but contained errors serious enough to derail real work — a phenomenon they named workslop. Cleanup averaged nearly two hours per incident. CNBC’s coverage of the research noted the problem is especially prominent in professional services, which is exactly where financial content lives.

The content wasn’t obviously wrong. It was polished enough to pass a quick review, specific enough to seem credible, and wrong in ways that only a subject matter expert would catch.

In financial services, that gap between plausible and accurate is where compliance risk lives.

The other thing that’s changed is the volume. In 2024, AI-assisted content was something some teams were experimenting with. In 2026, it is standard practice across most marketing functions.

That means the financial content landscape is increasingly populated by material that sounds authoritative and covers all the expected points but lacks the judgment, sourcing, and genuine expertise that make financial content credible and defensible.

The case for human writers with real financial experience isn’t that AI can’t produce fluent prose. It clearly can. The case is that fluent prose and accurate, compliant, trustworthy financial content are not the same thing — and in this category, the difference matters.